Is Your LLM Just a Fancy Chef?

Weekly Wheaties #2603

In this newsletter:

📝 Post: Is Your LLM Just a Fancy Chef?

🗞️ In Case You Missed It: Verizon’s Network Outage

🗞️ In Case You Missed It: Apple Updates

🗞️ In Case You Missed It: Quick Takes

😎 Pick of the Week: Movie Lists

📦 Featured Product: Logitech Master Mice

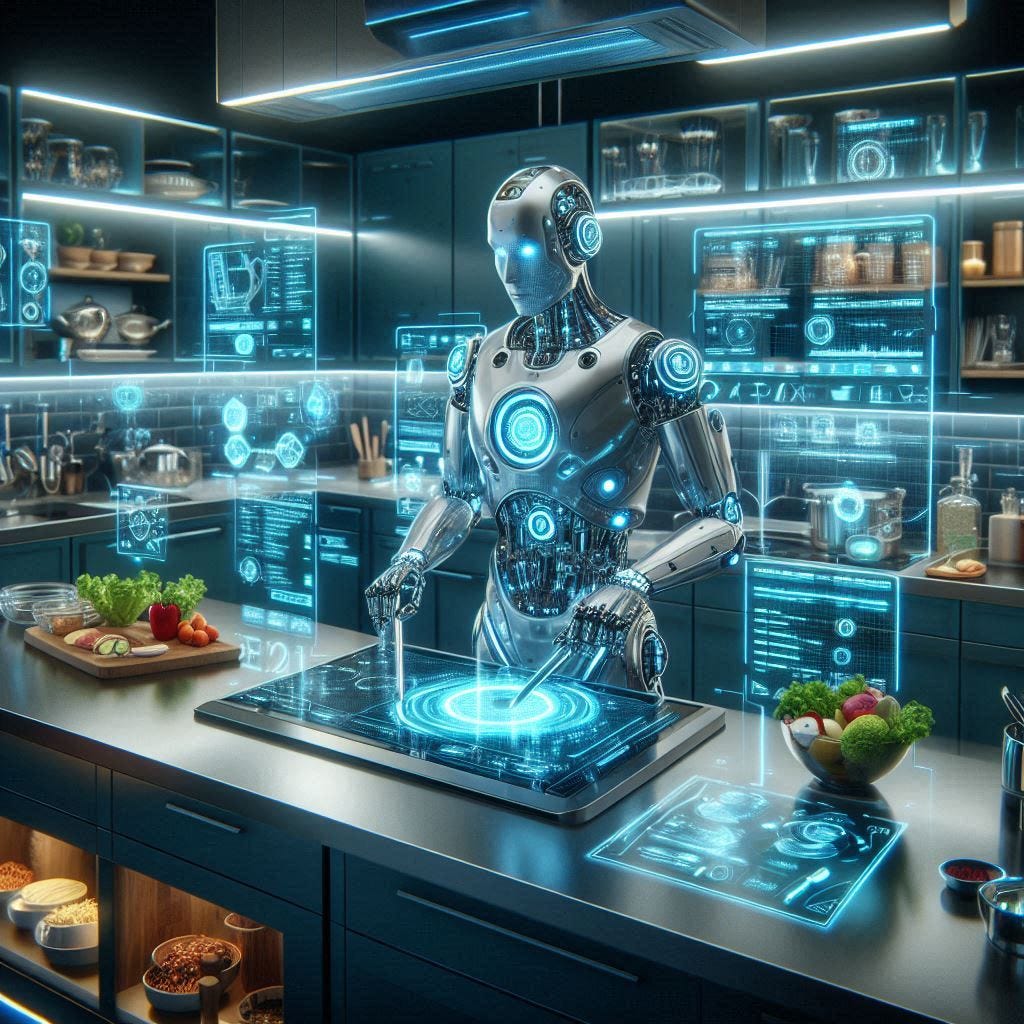

📝 Is Your LLM Just a Fancy Chef?

Last week, I dived a bit into the machine learning space. Here is a quick breakdown for comparing it with Large Language Models (LLMs) and AI: algorithms follow rules; machine learning learns patterns; and AI creates something new based on tons of training information.

The LLM is the backend of a chatbot - Copilot, ChatGPT, Gemini, Claude, and Perplexity, to name a few. There are also many forms of LLMs, shaped by the end goal of the company in question. Each one also depends on the company’s access to information, the staff employed there, the type of coding, decisions on algorithms, the machine learning code built into the LLM itself (again, as mentioned in last week’s newsletter), and much, much more.

At a high level, an LLM is a system trained on enormous amounts of text and information, which helps it recognize patterns in language. It is not an all-knowing “computer” or “entity”; it predicts which words are most likely to come next based on everything it has seen before. Think of it as an extremely advanced version of autocomplete, one that works at the sentence and paragraph level.
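To make the autocomplete comparison concrete, here is a minimal sketch in Python. It is a toy word-pair counter, not how a real LLM is built (real models use neural networks trained on vastly more text), and the function names and the tiny sample text are made up purely for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the word seen most often after `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def autocomplete(follows, start, length=2):
    """Extend `start` by repeatedly picking the most likely next word."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Hypothetical training text, standing in for "everything it has seen before".
corpus = "the chef cooks the meal . the chef tastes the meal . the chef cooks dinner ."
model = train_bigrams(corpus)

print(predict_next(model, "chef"))   # cooks
print(autocomplete(model, "the"))    # the chef cooks
print(predict_next(model, "pizza"))  # None: the toy model never saw this word
```

Notice that the toy model has no idea whether “the chef cooks” is true. It only knows that those words tended to appear together in the text it was trained on.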

Except here’s the kicker many people forget: the information it gives as its “answer” can be very much biased. It can be biased based on the user asking the question, or just on simple demographics such as where the user is located. It can even be shaped by previous conversations other users have had with the LLM around the same topic, when those conversations are used for training. The more you use “your” chatbot, the more it will know you, and the more its responses will sound like you. Of course, that also depends on whether you decide to give your chatbot a personality.

With this in mind, remember that the answers given by an LLM can also be factually incorrect. This includes many of the AI Overviews popping up in our search results. LLMs do not look things up like a librarian or search engine would; they simply create a response based on their training, which also has a cutoff date. Yes, some models are allowed to use search engines to get more recent information, but they cannot always tell fact from fiction, as they are only looking at patterns. If you want more up-to-date and/or factual information, make sure to check out my post on **Mastering the Art of Speaking Google.**

For further context (and a little bit of fun), let’s compare LLMs to chefs. We could argue that a recipe is comparable to an algorithm, and machine learning is the chef going through training (cooking a lot of different dishes, testing things out, learning what things taste like, etc.).

Now, let’s consider the chef who wants to cook a meal that doesn’t have a recipe or hasn’t been cooked before. All past experiences help the chef decide what spices to add, how to cook the items in question, what works well together, and more. Each of these steps and decisions plays into how the final dish comes together. And if my watching of MasterChef has taught me anything, it’s that giving the same recipe and items to a group of 10 or more professional chefs would lead to 10 or more completely different final products.

In comparison, asking the LLMs from various companies (Copilot, Gemini, etc.) gives different results, even with the exact same prompt. Each ingredient, spice, and type of cooking represents the tone, style, training data, algorithms, and every other piece of an LLM’s unique characteristics. The models are not deciding what the “correct” response is, only what is best for their model. In the same way, a chef can’t dictate what the “correct” dish is, only what is best for their style, training, and personal preferences.

If you want to dive deeper into the world of LLMs, there are tons of resources. For starters, check out this short YouTube video diving into the math of Large Language Models from scratch. An entire online course at Stanford, CME 295, explores the world of Transformers and LLMs. Lastly, Simon Willison’s blog shares a look back in 2025: The year in LLMs.

Remember that LLMs aren’t trying to cook the “correct” answer; they’re cooking their answer specific to you. And just like chefs, their results depend on everything put into the model - including your past conversations. As you use LLMs more often moving forward, keep in mind how your prompting and the model’s creation both play into the answer you receive.

🗞️ ICYMI: Verizon’s Network Outage

On Wednesday, January 14th, the Verizon Wireless mobile network went down for around 9 hours on the East Coast of the US. Other networks (even those that piggyback on Verizon’s towers) didn’t report any outages - at least not at the same scale. Until more information is released, if that happens anytime soon, we can only speculate. Some in the cybersecurity space note that this looks like a possible attack on the routing equipment (similar to a DDoS attack). As an individual, you shouldn’t have anything to worry about. Verizon also says it’ll credit customers $20 after its service outage. Here’s how to claim it.

Things that are out of the control of major companies like this happen all the time. The larger the company, the more it is open to attacks. We’ve also seen many of these networks go down during natural disasters or anywhere a large group is gathered (sporting events and concerts, for example). I would not encourage you to switch providers over a one-off event like this; consider it only if you have had consistent issues or a poor connection at your home or office.

🗞️ ICYMI: Apple Updates

In a joint statement from Google and Apple, Apple picks Google’s Gemini to run AI-powered Siri coming this year. This comes after more than a year of customers waiting for the promised Apple Intelligence and a “smarter Siri.” I’d imagine we’ll hear more about this at Apple’s WWDC this summer, but there is still a bit of speculation as to what this looks like in the long term. 9to5Mac reports that there will be no Google branding on Siri, and that “Apple’s AI Deal With Google Is Temporary and Buys It Time.” For some more inside information, you can read Ming-Chi Kuo’s quick take on the Apple–Google AI partnership.

Apple also introduced Apple Creator Studio, an inspiring collection of the most powerful creative apps. This combines Final Cut Pro, Logic Pro, Pixelmator Pro, Keynote, Pages, Numbers, Freeform, and some AI stock (photo and videos) for $12.99/month or $129/year. College students and educators can subscribe for $2.99 per month or $29.99 per year from the Pro Apps Bundle for Education.

🗞️ ICYMI: Quick Takes

2026 May Be the Year of the Mega I.P.O. “If SpaceX, OpenAI, and Anthropic go public, they will unleash gushers of cash for Silicon Valley and Wall Street.”

Elon Musk surprises everyone: SpaceX will attempt to reach Mars by the end of 2026.

Erdos Math Problem #397 was solved by ChatGPT 5.2 and submitted and accepted by Terence Tao. You can see a more detailed response and other open problems here.

Gmail users urged to switch off these two main features over privacy concerns.

OpenAI is reportedly asking contractors to upload real work from past jobs.

Tesla ends Full Self-Driving purchases, shifts to subscription-only.

😎 POTW: Movie Lists

Wondering where your favorite movie, actor, or skill stacks up against the rest? Check out some of these lists in the movie space:

📦 Featured Product

In the past, I’ve shared a few inexpensive wireless mice, with the most popular being the Logitech M705. However, if you want to spend a bit more and have a more comfortable experience with a few extra perks, Logitech has you covered at three other levels. Up first is the MX Master 2S. The scrolling is updated a bit from the M705, and it’s much more ergonomic and has a rechargeable battery. Moving up is the MX Master 3S. It adds higher quality movement, works on glass, and has a much quieter click. The top-of-the-line MX Master 4 is the most ergonomic and performance-based mouse. Most of them are offered in a wireless or wired option and can be used on Mac or PC.